In today's fast-paced world, creating an AI agent is simpler than ever. With just a few lines of code or an easy-to-use tool, anyone can build a system that can communicate, plan, and take action. However, many startups are now facing a "reckoning." After years of hype, founders are discovering that many AI projects are just impressive demos that don't actually make money. This often happens because of "pilot paralysis"—building endless prototypes that never reach actual customers. These prototypes are usually too complicated or don't solve a clear problem.

To succeed in the autonomous economy, startup founders need to change their approach. Instead of just "building technology," they must focus on "orchestrating outcomes." This starts with a simple, focused "Build" phase centered on one thing: the One-Page Agent Hypothesis.

The 2026 Inflection Point: Breaking Free from Limited Pilots

The AI landscape has transformed. We've moved from simple chatbots that just respond to questions to advanced systems that work towards specific goals. Early in 2026, we saw a major shift as businesses started using AI agents in large-scale, organized production systems instead of just small, isolated tests. Yet, many smaller teams are still stuck. Reports show that while many companies have tried using agent systems, only about a quarter have successfully integrated them into their daily operations.

The main hurdle usually isn't the technology itself. It's the lack of a clear business reason for the AI and a tendency to chase complex features before getting the basics right. Startups that thrive treat innovation as a structured process: they focus on how the system is built and managed rather than on running one-off experiments. This means you need to stop asking "What can this AI do?" and start asking "What specific problem will this solve for my user?"

The Fallacy of the "God Agent"

A common and costly mistake in 2026 is trying to create a "God Agent": a single AI system designed to handle every task within a company, from sales and customer support to billing and technical help. While it might seem efficient to have one central "brain" managing all these tasks, this approach is a major reason why projects fail.

When you try to build a single, all-encompassing agent, several technical and financial problems can arise:

Reasoning Degradation: When you add too many instructions to a single agent, the main instruction set, called the "system prompt," becomes overwhelmingly large. The agent then struggles to switch between different tasks and its ability to think clearly decreases. Imagine trying to remember hundreds of different rules for every little thing you do—it becomes impossible to focus on any single rule effectively.

Prompt Bloat: A massive prompt means you pay to process a huge number of tokens on every single request, even for very simple interactions. For example, even if the AI only needs to say "hello," the model still has to read thousands of tokens of instructions first. This drives up costs unnecessarily, especially for common and straightforward tasks.

Hallucination Spikes: When system prompts are large and disorganized, the AI is more likely to "hallucinate." This means it might invent information, like fake return policies or made-up customer data. It happens because the AI is overwhelmed by irrelevant details and struggles to find the correct information, leading it to fill in the gaps with fabricated content.
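The cost side of prompt bloat is simple arithmetic, and a rough sketch makes it concrete. The token counts, request volume, and price below are hypothetical placeholders, not figures from any provider; substitute your own numbers.

```python
def system_prompt_cost(system_prompt_tokens: int,
                       requests: int,
                       price_per_million_tokens: float) -> float:
    """Cost of re-sending the system prompt on every request.

    All inputs are assumptions supplied by the caller; the price is
    expressed per one million input tokens.
    """
    total_tokens = system_prompt_tokens * requests
    return total_tokens * price_per_million_tokens / 1_000_000


# Hypothetical comparison: one bloated prompt vs. a small specialist prompt.
REQUESTS_PER_MONTH = 100_000          # assumed traffic
PRICE_PER_MILLION = 1.0               # assumed price, in your currency

god_agent = system_prompt_cost(8_000, REQUESTS_PER_MONTH, PRICE_PER_MILLION)
specialist = system_prompt_cost(500, REQUESTS_PER_MONTH, PRICE_PER_MILLION)

print(f"God Agent prompt overhead:  {god_agent:,.2f}")
print(f"Specialist prompt overhead: {specialist:,.2f}")
print(f"Ratio: {god_agent / specialist:.0f}x")
```

Under these assumed numbers the overhead scales linearly with prompt size, so a prompt sixteen times larger costs sixteen times more before the agent has produced a single useful word.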

Lean startups avoid these issues by using a "division of labor." Instead of one massive AI bot, they create a "swarm of agents," where each agent is a specialist. For instance, one agent might be responsible for figuring out what a user wants (a "Triage Agent"). Once it understands the request, it can then pass the task to another specialized agent, like a "Billing Agent" or a "Sales Agent." This modular design makes the system more organized and easier to track, which is essential for professional business operations.
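The handoff from a Triage Agent to a specialist can be sketched in a few lines. This is a minimal illustration only: the keyword lists, function names, and the fallback to a human are assumptions made for the example, and a real triage step would more likely use a small classification model than keyword matching.

```python
from typing import Callable, Dict, Tuple


def billing_agent(message: str) -> str:
    # Placeholder specialist; a real one would carry its own small prompt.
    return f"[billing] handling: {message}"


def sales_agent(message: str) -> str:
    return f"[sales] handling: {message}"


def human_handoff(message: str) -> str:
    # Anything the triage step cannot classify goes to a person.
    return f"[human handoff] unclear request: {message}"


# Hypothetical registry of specialists and the keywords that select them.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "billing": billing_agent,
    "sales": sales_agent,
}
KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "billing": ("invoice", "refund", "charge"),
    "sales": ("pricing", "demo", "upgrade"),
}


def triage(message: str) -> str:
    """Return the intent label for a message, or 'unknown'."""
    text = message.lower()
    for intent, words in KEYWORDS.items():
        if any(word in text for word in words):
            return intent
    return "unknown"


def handle(message: str) -> str:
    """Route the message to the matching specialist."""
    agent = SPECIALISTS.get(triage(message), human_handoff)
    return agent(message)


print(handle("I need a refund for a double charge"))
print(handle("Can I book a demo?"))
print(handle("hello"))
```

Because each specialist is a separate function with its own narrow job, you can log which agent handled which request, which is what makes the design easier to track.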

The Problem-First Philosophy

Lean principles teach us to always put the "problem first and the solution second." Technology itself often provides only about 20% of the value in a project. The remaining 80% comes from rethinking and redesigning how work is done. This allows AI agents to handle routine tasks, freeing up people to focus on important strategic work.

If you create AI solutions based on the technology itself rather than on a genuine human problem, you end up with "redundant solutions" that nobody uses. For example, one company spent a year developing a complex AI assistant for restaurants. This assistant was designed to find "fancy dinner places." However, they discovered it didn't solve a real problem for users. The project was ultimately abandoned because they started with cool features instead of first confirming if there was a real need for the service.

Before you write any AI instructions, you must thoroughly understand the problem and figure out its value. Ask yourself these key questions:

Is this a task that a human currently spends more than an hour each day doing?

Would a 40% reduction in how long it takes to complete this task significantly improve our company's profits?

Is the data needed for this task clean and organized enough for an AI to understand and use it correctly?
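The first two questions can be turned into a back-of-the-envelope estimate. The sketch below assumes a hypothetical hourly cost and number of working days; only the one-hour-per-day and 40% figures come from the questions above.

```python
def annual_value_of_time_saved(hours_per_day: float,
                               reduction: float,
                               working_days_per_year: int,
                               hourly_cost: float) -> float:
    """Rough yearly value of shortening a recurring task.

    `reduction` is a fraction (0.40 means 40% less time).
    """
    hours_saved = hours_per_day * reduction * working_days_per_year
    return hours_saved * hourly_cost


# One hour a day, cut by 40%; 250 working days and a cost of 50 per hour
# are assumptions chosen purely for illustration.
value = annual_value_of_time_saved(1.0, 0.40, 250, 50.0)
print(f"Estimated annual value per person: {value:,.0f}")
```

If the result is small next to what the agent costs to build and run, that is a signal the problem may not be worth solving with AI at all.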

The One-Page Agent Hypothesis

A "One-Page Agent Hypothesis" is a concise document that keeps your AI experiment focused. It's not a detailed technical plan. Instead, it's a business plan for a short testing period. Its main goal is to find "directional truth"—enough evidence to decide whether to continue building the AI solution.

Here are the essential parts of your hypothesis:

The Goal: Clearly state one specific business outcome you want to achieve. For example, your goal might be to "automate the classification of 90% of incoming customer leads." This makes it obvious what success looks like.

The Audience: Precisely identify who will use the agent. Knowing your audience prevents "agent sprawl," where different teams create their own AI agents without any coordination, leading to confusion and duplicated efforts.

The Metric (North Star): Choose the single most important measure of success for the agent. This is often "Decision Velocity"—how much faster your team can make smart, data-driven decisions. This metric helps you stay focused on the most impactful aspect of the AI's performance.

The Constraint: Set strict limitations for the agent's performance. For example, the agent must provide a response in under three seconds. These boundaries prevent user frustration and ensure the AI operates within acceptable limits.

The Timeline: Commit to a short, focused testing period, typically a 7-day sprint. This forces you to prove the core value of your agent quickly, avoiding lengthy development cycles without clear results.
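One way to keep the hypothesis honest is to write it down as a small data structure and check that no part is missing. The class and field names below are hypothetical, and the audience value is invented for the example; the goal, metric, constraint, and 7-day timeline mirror the examples above.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AgentHypothesis:
    goal: str
    audience: str
    north_star_metric: str
    constraint: str
    timeline_days: int = 7

    def problems(self) -> List[str]:
        """Return a list of issues; an empty list means the page is complete."""
        issues = []
        for name in ("goal", "audience", "north_star_metric", "constraint"):
            if not getattr(self, name).strip():
                issues.append(f"{name} is empty")
        if not 1 <= self.timeline_days <= 7:
            issues.append("timeline should be a sprint of 7 days or fewer")
        return issues


hypothesis = AgentHypothesis(
    goal="Automate the classification of 90% of incoming customer leads",
    audience="Inbound sales team",  # assumed audience for illustration
    north_star_metric="Decision Velocity",
    constraint="Respond in under three seconds",
)
print(hypothesis.problems())
```

The 7-day ceiling in the check is a design choice taken from the timeline above; loosen it if your team deliberately runs a different sprint length.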

Choosing Your Model Tier: The "Whiteboard Test"

In 2026, the AI model market is divided into three main categories: Small Language Models (SLMs), Large Language Models (LLMs), and Large Reasoning Models (LRMs). A smart founder chooses the smallest and most affordable model that can effectively complete the task. Using an overly powerful and expensive model for a simple job is a common way startups waste money.

The most frequent cause of higher-than-expected costs is using a "brain surgeon" for a simple task, like taking a temperature. Many startups use expensive models like GPT-4 to handle basic requests, which can increase costs tenfold or more. It's like using a supercomputer to do simple math problems.

To select the right model tier, use the "Whiteboard Test," which helps you match the task complexity to the model's capabilities:

LRMs: These are for tasks requiring deep logical thinking, complex step-by-step planning, or advanced coding. If a human would need a whiteboard and significant focused thinking time (around five minutes) to solve the problem, an LRM is probably necessary. Be aware that LRMs can be very expensive. They use "hidden reasoning tokens" that you are billed for, and they often have a noticeable delay of 30 to 60 seconds per response.

SLMs: These models are best for highly specialized fields like healthcare or finance, where precision on smaller, specific datasets is critical. They are an excellent choice for simple tasks like sorting user requests or basic text classification because they are both fast and inexpensive.
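The Whiteboard Test can be expressed as a small decision function. This is a sketch under stated assumptions: the two yes/no inputs are a simplification, and treating a standard LLM as the default for everything in between is an assumption of this example, since the test above only spells out the LRM and SLM cases.

```python
from enum import Enum


class ModelTier(Enum):
    SLM = "small language model"
    LLM = "large language model"
    LRM = "large reasoning model"


def whiteboard_test(needs_whiteboard: bool, simple_classification: bool) -> ModelTier:
    """Pick the cheapest tier that plausibly fits the task.

    needs_whiteboard: a human would need a whiteboard and several minutes
        of focused thinking to solve it.
    simple_classification: the task is sorting requests or basic text
        classification.
    """
    if needs_whiteboard:
        return ModelTier.LRM
    if simple_classification:
        return ModelTier.SLM
    # Assumed default for tasks that fit neither description.
    return ModelTier.LLM


print(whiteboard_test(needs_whiteboard=False, simple_classification=True))
print(whiteboard_test(needs_whiteboard=True, simple_classification=False))
```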

The Hierarchy of Workflow Patterns

Your very first "Minimum Viable Agent" (MVA) should be as simple as possible. Building complexity should be a last resort, not the starting point. We can think of AI workflows as a series of steps, increasing in difficulty:

Routing: This is the simplest level. The AI's job is to understand an incoming request and send it to the correct place or person. It's like a receptionist directing calls.

RAG (Retrieval-Augmented Generation): This involves the AI pulling specific facts from a database or knowledge base. This helps ensure the AI's answers are based on real information and prevents it from making things up. It grounds the AI's responses in verified data.

Function Calling: Here, the AI can use specific tools or software to perform an action. This could be anything from using a calculator to performing a database search. It allows the AI to interact with other systems to get things done.

Full Autonomy: This is the most advanced level, where the AI can plan its own multi-step process to achieve a goal without human intervention. It figures out the sequence of actions needed to complete a task.

Most startups find success by focusing on RAG or Function Calling for their initial AI agent. These patterns let the agent look up verified information before it responds, which significantly reduces the risk of costly legal or financial errors. Starting with these simpler patterns helps build trust and reliability.
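The core idea of the RAG pattern, answering only from retrieved facts, can be illustrated without any external service. The sketch below swaps in naive word overlap for a real vector search, and the two knowledge-base entries are invented sample data, so treat it as an illustration of the control flow rather than a production retriever.

```python
import re
from typing import Optional, Set

# Invented sample facts standing in for a real knowledge base.
KNOWLEDGE_BASE = [
    "Items can be returned within 30 days with a receipt.",
    "Standard shipping takes 3 to 5 business days.",
]


def words(text: str) -> Set[str]:
    """Lowercase alphabetic words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, min_overlap: int = 2) -> Optional[str]:
    """Return the best-matching fact, or None if nothing matches well enough."""
    question_words = words(question)
    best, best_score = None, 0
    for fact in KNOWLEDGE_BASE:
        score = len(question_words & words(fact))
        if score > best_score:
            best, best_score = fact, score
    return best if best_score >= min_overlap else None


def answer(question: str) -> str:
    fact = retrieve(question)
    if fact is None:
        # No grounding available: refuse rather than guess.
        return "I don't have verified information on that."
    return f"According to our records: {fact}"


print(answer("How many days does standard shipping take?"))
print(answer("What is the name of your CEO?"))
```

The important design choice is the refusal branch: when retrieval finds nothing relevant, the agent says so instead of filling the gap with invented content.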

Human-in-the-Loop (HITL) as Risk Management

In 2026, fully autonomous AI agents still carry significant risks. AI "hallucinations" cost businesses billions of dollars each year. Additionally, malicious actors can "hijack" AI systems through methods like prompt injection, leading to security breaches. Because of these dangers, lean startups commonly use Human-in-the-Loop (HITL) systems.

HITL is not a sign of a weak AI. Instead, it acts as a crucial safety feature. It ensures that important actions, such as transferring money or sending messages to the public, are reviewed by a human before they are finalized. By clearly defining which tasks humans will handle and which ones agents will manage, you create a system that is both efficient and secure. Successful organizations establish clear pathways for escalating tasks. If an agent gets stuck or encounters a problem, it can transfer the task to a human before a mistake occurs.
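An approval gate of this kind can be sketched as a wrapper around action execution. The action names and the callback signatures here are hypothetical; in practice the approval step would be a review queue or a chat prompt to a person rather than a Python function.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Assumed list of actions that always require human sign-off.
HIGH_RISK_ACTIONS = {"transfer_money", "send_public_message"}


@dataclass
class ProposedAction:
    name: str
    payload: Dict[str, object] = field(default_factory=dict)


def execute_with_oversight(action: ProposedAction,
                           run: Callable[[ProposedAction], None],
                           approve: Callable[[ProposedAction], bool]) -> str:
    """Run low-risk actions directly; ask a human before high-risk ones."""
    if action.name in HIGH_RISK_ACTIONS and not approve(action):
        return "rejected_by_human"
    run(action)
    return "executed"


executed = []
status = execute_with_oversight(
    ProposedAction("transfer_money", {"amount": 500}),
    run=executed.append,
    approve=lambda action: False,  # the reviewer declines in this example
)
print(status, executed)
```

Keeping the high-risk list explicit and short makes it clear which tasks belong to humans and which belong to the agent.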

Setting Hard Constraints

Before you start writing any code or instructions for your AI agent, you must set clear limits and rules for its operation. If you fail to do this, you could end up facing "runaway costs" or critical technical failures. These constraints help ensure your AI operates predictably and within your budget and performance goals.

Latency: If your system is too slow, users will quickly become frustrated and abandon the agent. For example, serverless functions that "cold start" can add a delay of three seconds or more to a response. That delay can cause external platforms, like Slack, to time out and resend the request, which increases workload and can cause duplicate actions or errors.

Budget: Every piece of information processed by an AI costs money, and "context" (the conversation history and instructions) is a major cost factor. If your agent keeps the entire conversation history without summarizing it, your "token counts"—the units of text processed—will skyrocket. This leads to unexpectedly high expenses.

Tools: Provide your agent with only the "minimum viable toolset." This means starting with just the most essential tools needed for its task. Giving the agent too many tools can cause it to get stuck in endless loops or make conflicting decisions, hindering its effectiveness.
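These three limits can be written down as configuration before any agent logic exists. The sketch below is illustrative: the default values and tool name are assumptions, token counts are approximated by whitespace-separated words, and it drops the oldest messages instead of summarizing them, which is a simpler stand-in for the summarization mentioned above.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class HardConstraints:
    max_latency_seconds: float = 3.0               # assumed response budget
    max_context_tokens: int = 2000                 # assumed context budget
    allowed_tools: Tuple[str, ...] = ("search_orders",)  # minimum viable toolset


def estimate_tokens(text: str) -> int:
    """Crude approximation: one token per whitespace-separated word."""
    return len(text.split())


def trim_history(messages: List[str], max_tokens: int) -> List[str]:
    """Keep the most recent messages that fit inside the token budget."""
    kept: List[str] = []
    used = 0
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))


def tool_allowed(tool_name: str, constraints: HardConstraints) -> bool:
    return tool_name in constraints.allowed_tools


limits = HardConstraints(max_context_tokens=3)
print(trim_history(["a b c", "d e", "f"], limits.max_context_tokens))
print(tool_allowed("delete_account", limits))
```

A real deployment would use the model provider's tokenizer for counting and would enforce the latency limit with a timeout around the model call.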

The 7-Day Build Sprint

The main goal for the first week of development is to build a "Minimum Viable Agent" (MVA). This initial version should be robust enough to be tested with a small group of 5 to 10 real users. This rapid testing phase is crucial for gathering early feedback and validating your core assumptions.

Focus on the Outcome

Building AI agents in 2026 requires discipline. It's essential to resist the temptation to create a complex "God Agent." Instead, concentrate on achieving one specific, measurable goal. By starting with a clear One-Page Hypothesis and committing to a 7-day build sprint, you can effectively validate your AI idea before spending valuable startup resources.

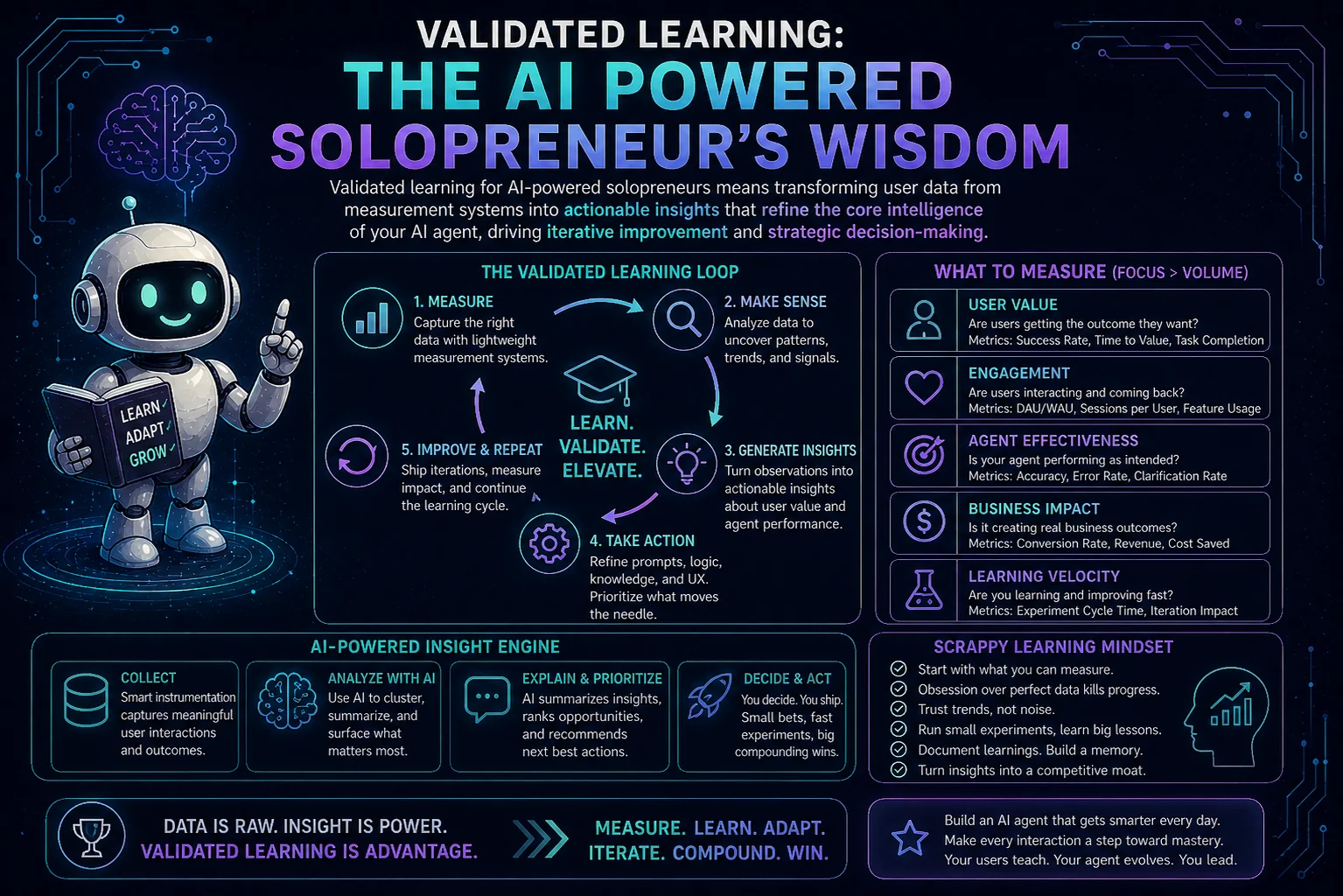

True innovation is not just about the technology you acquire; it's about the systems you create to measure, manage, and scale that technology. In the next part of this series, we will explore how to measure the "directional truth" of your MVA and identify the key metrics that truly drive business growth.
