In the first post of this series, we talked about the Build phase—picking a "Claw" variant and shipping a single, vertical Skill using local-first architecture and the latest 2026 model weights.

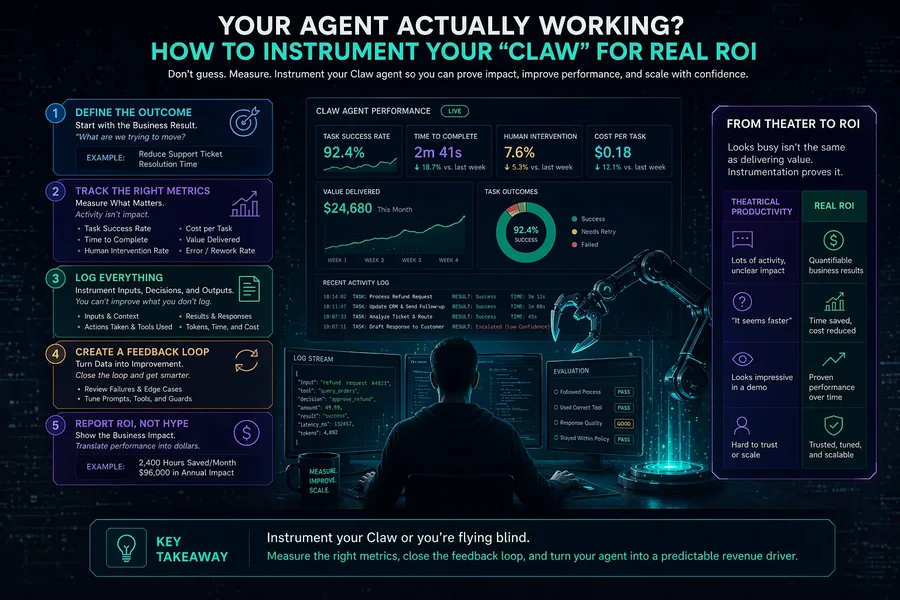

But here is the hard truth for April 2026: Most AI "automation" in early-stage startups is actually theatrical productivity. You spend four hours tweaking a complex GPT-5.x Contemplation prompt to save four minutes of manual data entry. You feel busy, your terminal is full of "thinking" logs, but your business isn't moving.

In the Lean Startup framework, the Measure phase is where we kill the theater. If you’ve deployed a Claw-style agent—whether it’s OpenClaw on your laptop or ZeroClaw on a remote server—you need to move past "vibes." You need to instrument your agent to tell you three things:

- Is it saving more time than it costs to maintain (including your "babysitting" time)?

- What is the Blast Radius when it fails?

- Where is the friction in the Human-in-the-Loop (HITL) process?

Moving Beyond the "Vibe Check"

When you’re a solopreneur, it’s easy to say, "Yeah, the agent seems helpful." But "helpful" doesn't scale. To measure a Claw agent properly, you have to treat it like a junior employee. You wouldn't let a junior hire work for six months without a performance review; don't let your agent run without a dashboard.

The "Claw Metric" Stack

Because Claw variants are local-first and skill-driven, you have access to raw data that SaaS-based "Black Box" agents hide from you. You should be tracking:

- Success Rate per Skill: Out of 10 times the agent tried to "Update the CRM," how many times did the API return a 200 OK without you intervening?

- Token Efficiency: Are you using an expensive Claude 4.x Opus call for a task that a local Gemma-4 27B or Mistral-Small-3 could do via OllamaClaw for $0?

- Manual Intervention Rate: How often did the agent stop and ask you for help? If your "autonomous" agent is pestering you every 5 minutes, it’s just a high-maintenance UI.

Measuring Workflow Friction with "Lobster"

One of the most powerful tools in the Claw family is Lobster, the visual workflow shell. In the Build phase, Lobster helps you chain skills together. In the Measure phase, Lobster becomes your X-ray machine.

When you look at a workflow in Lobster, look for the Red Boxes. These are your failure points.

⚠️ Identify Failure Points: When looking at a workflow in Lobster, identify the Red Boxes. These represent your failure points. Is it a model hallucination, an API timeout, or a logic error?

- The Approval Bottleneck: If your workflow has a "Human Approval" step that sits idle for four hours every day while you're in deep work, your agent isn't the problem—your process is.

- The Silent Failure: Use Lobster’s logging to find where the agent "thinks" it succeeded but actually hallucinated a result. This is common with 2026 Extended Thinking models that can sometimes talk themselves into a wrong conclusion.

✅ Pivot the Skill: If a specific step in your Lobster flow has a failure rate higher than 20%, stop trying to fix the prompt. Pivot the Skill. Maybe the agent shouldn't be "writing the email"; maybe it should only be "extracting the intent from the last 5 LinkedIn posts." Shrink the task until the measurement turns green.

Measuring the "Blast Radius" with IronClaw

In a startup, "moving fast" is good; "breaking things" is only okay if those things aren't your customer database or your compliance standing. This is where IronClaw or SafeClaw come in. These variants are designed for sandboxed execution, but for a founder, they are also measurement tools for risk.

The "Audit Log" as a Learning Tool

IronClaw generates strict audit logs of every action the agent takes. As a founder, you should review these once a week to measure:

- Measure "Intent vs. Action": Did the agent try to delete a file or hit an endpoint it wasn't supposed to touch?

- Measure "Permission Creep": Are you giving the agent "Admin" access when it only needs "Read" access to do its job?

By measuring the Risk-to-Value ratio, you can decide if an agent is ready to move from your "personal experiment" to a "customer-facing feature." If the Blast Radius is too high, your Measure phase has just saved you from a PR disaster.

The ROI Equation: Time-to-Learning (TTL)

For a solopreneur in April 2026, the most important metric is Time-to-Learning (TTL).

- How long does it take from the moment you have an idea for an automation to the moment you know, with data, if it’s actually feasible?

If you use a heavyweight enterprise agent, your TTL might be two weeks. If you use Nanobot or ZeroClaw running local Gemma 3 or Phi-4 weights, your TTL should be two hours.

| Variant | Build Time | Measure Point | Success Signal |

|---|---|---|---|

| OpenClaw | 1 hour | Skill reliability | 80%+ success on complex logic |

| ZeroClaw | 30 mins | Execution speed | <300ms response time on local Pi 5 |

| Nanobot | 20 mins | Resource usage | Runs on an ESP32/Edge without crashing |

💡 Key Insight: For a solopreneur, the most crucial metric is Time-to-Learning (TTL). This measures how long it takes from having an automation idea to knowing if it's feasible.

Measure this: Keep a simple spreadsheet.

- Idea: "Auto-reply to X DMs."

- Tool: DroidClaw.

- Time to Build: 3 hours.

- Measurement: Only 1 in 10 replies were actually useful due to 2026 bot-detection filters.

- Learning: Pivot. The agent shouldn't reply; it should just summarize DMs into a daily "Priority" briefing.

Instrumenting for "Cost-per-Task"

Early-stage founders often ignore API costs because they have "free credits" from OpenAI or Google. This is a mistake. You need to measure your Unit Economics now so you don't build a business model that is structurally unprofitable when the credits dry up.

If you are using NemoClaw or a cloud-native variant, your costs can spike quickly with high-reasoning models.

- Measure your "Context Window" usage. Are you sending a 100-page PDF to a GPT-5.x model for a 1-sentence summary? That’s burning money.

- Experiment with "Small Models." Can you swap a high-cost model for a local one? Use the Measure phase to run a head-to-head test: Give the same 10 tasks to Claude 4.x and a local Gemma 4-4EB running on OllamaClaw. If the local model gets 8/10 right for $0, you’ve just found your path to sustainable scale.

The "Stop/Go" Dashboard

At the end of your first week of measurement, you should have enough data to fill out this "Stop/Go" report:

If the answer to any of these is "No," you have reached a Pivot Point. You don't keep "building" the same thing. You change the architecture. You move from a "General Claw" to a "Specialized Variant" like VisionClaw or DroidClaw.

Summary: Don't Just Automate, Instrument

Measurement is the bridge between a "side project" and a "scalable product."

If you are a founder using Claw agents, your competitive advantage isn't that you have "AI"—everyone has that in 2026. Your advantage is that you have a tight feedback loop. By using tools like Lobster for workflow visibility, IronClaw for risk management, and OllamaClaw for cost control, you are gathering the "intelligence" needed to win.

✅ Measurement Goal: Pick one agent skill you built. Track every time it fails today. By Friday, identify the one reason it fails most often. Is it a bad prompt? A slow API? A lack of context? Find that one reason, and you’re ready for Post 3: The Learn Phase.

What's Next?

Data is useless if you don't act on it. In the final post of this series, we will talk about the Learn phase: How to use your measurements to decide when to Molt (pivot) your agent or double down and scale to an enterprise-grade stack like NemoClaw.

No comments yet

Be the first to share your thoughts on this article!