You have an AI agent idea. It's brilliant, it's groundbreaking, and you're eager to bring it to life. But as a solo founder or someone leading a very early-stage startup, you know that time is scarce, cash is even scarcer, and every single decision matters immensely. It's tempting to dive straight into building, to magically manifest your vision through endless lines of code and prompt engineering. However, the most efficient and effective way to launch a successful startup doesn't start with writing code; it begins with gathering proof. It starts with Measurement.

In the fast-paced world of AI agents and automated systems, it's easy to get caught up in the technical wonders of Large Language Models (LLMs). But for founders, especially those operating with limited funds, the real marvel is creating something that people genuinely need and are willing to pay for. This is precisely where the "Measure" step of the lean startup cycle becomes your most valuable asset. It's not about creating endless reports or staring at Google Analytics dashboards for hours. Instead, it's about collecting the right signals to confirm or deny your most important assumptions about user behavior and value delivery.

As a solopreneur, you are the pilot, the navigator, and the engine. If you fly blind, you will eventually crash. Measurement is your instrument panel. It guides your limited resources to where they will have the greatest impact. This focused strategy ensures you learn quickly and efficiently, preventing you from wasting effort on features or products that don't connect with your users. In the world of AI, where development and API costs can climb very quickly, measuring early is the best way to protect your runway and ensure that every token spent contributes to a validated business goal.

Pinpointing Your North Star: Essential Metrics for Early-Stage AI Agents

Before you can measure anything, you must first identify what to measure. For a startup focused on AI agents, particularly in its early days, your main goal should be to gather validation metrics that prove your core idea. For now, forget about vanity metrics like total sign-ups or social media followers. These numbers might feel good, but they don't tell you if your product works. You need to know if your agent is truly solving a problem and if users are engaging with it in a meaningful, repeated way.

As Eric Ries famously explained in "The Lean Startup," the goal isn't just to build a product, but to build a lasting business by learning what customers truly want. For early AI agents, where the "intelligence" of the product is often black-boxed and unpredictable, this learning process is absolutely vital. Consider these key categories of metrics, each designed to give you a clear understanding of your progress.

The first category covers problem validation: these metrics reveal whether the problem you're aiming to solve is real and significant. This aligns with Steve Blank's "Customer Development" approach of understanding the customer's pain before writing the solution's code. To gauge this, you can track the Qualitative Feedback Capture Rate, which measures how many of the people you pitch your concept to are willing to discuss their current workflows on a call. A rate above 30% signals that you've hit a nerve and the problem is significant enough for people to engage. You can also use a Problem Intensity Score. When describing the problem, ask users to rate its impact on a scale of 1-10. If the average is below 7, people might not be desperate enough to pay for an automated solution yet. Lastly, pay attention to the Frequency of Mention in open-ended conversations. How often does the user use the exact keywords your agent targets? For instance, if you're building a "Meeting Summarizer," how often do they complain about "missing action items"?
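As a rough sketch, the three problem-validation numbers above can be computed from a handful of interview notes. The function names, the sample data, and the choice to define Frequency of Mention as "share of conversations that contain the keyword" are illustrative assumptions, not a standard definition.

```python
from statistics import mean


def capture_rate(pitched: int, agreed_to_call: int) -> float:
    """Share of pitched people who agreed to discuss their workflow on a call."""
    if pitched == 0:
        return 0.0
    return agreed_to_call / pitched


def problem_intensity(scores: list[int]) -> float:
    """Average of the 1-10 impact ratings; 0.0 when nobody has rated yet."""
    return mean(scores) if scores else 0.0


def mention_frequency(transcripts: list[str], keyword: str) -> float:
    """Share of conversations that mention the keyword (case-insensitive)."""
    if not transcripts:
        return 0.0
    hits = sum(1 for text in transcripts if keyword.lower() in text.lower())
    return hits / len(transcripts)


# Hypothetical sample data for a "Meeting Summarizer" idea.
rate = capture_rate(pitched=20, agreed_to_call=7)
intensity = problem_intensity([8, 9, 6, 7, 8])
frequency = mention_frequency(
    [
        "We keep missing action items after every sync.",
        "Scheduling is the painful part for us.",
        "Missing action items cost us a client last month.",
        "Notes are fine, honestly.",
    ],
    keyword="missing action items",
)

print(f"Capture rate: {rate:.0%} (threshold from the text: above 30%)")
print(f"Problem intensity: {intensity:.1f} (threshold from the text: 7 or higher)")
print(f"Mention frequency: {frequency:.0%}")
```

With only a dozen interviews these numbers are noisy, so treat them as a prompt for more conversations rather than a verdict.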

The second category covers solution validation: these metrics indicate whether your specific AI agent is seen as the right answer to that validated problem. During early demonstrations of a mock-up or a "Wizard of Oz" prototype (where you do the work manually), aim for "definitely yes" responses on the "Would You Use This?" Score. "Maybe" is usually a polite "No." You can also assess Feature Appeal by showing users a list of potential agent capabilities. Which one do they pick first? This tells you what to build in your V1 and what to cut. For an MVP, the Early Engagement Rate is crucial. What percentage of users who start a main task (like "Connect my Email") actually receive the final output? Treat a completion rate close to 100% here as a prerequisite for scaling.

The third category covers long-term viability: these metrics offer clues about long-term success and whether your agent is "sticky." For your first 10 testers, how many come back a second time without an email reminder? This Repeat Usage Rate is the ultimate "truth" metric. A metric unique to AI is the Intervention Rate. How often did you (the founder) have to "fix" the agent's output before the user saw it? Reducing this rate over time is the path to a scalable business. Finally, simply ask users, "How much effort did it take to get what you needed?" This User Effort Score is important because AI is supposed to reduce effort. If the user has to do too much prompt engineering to get a good result, the effort score will be poor.
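The Repeat Usage Rate and Intervention Rate above are simple ratios, and a minimal sketch makes the definitions concrete. The data shapes here (a session count per tester, and a `founder_edited` flag per agent run) are assumptions chosen for illustration.

```python
def repeat_usage_rate(sessions_by_user: dict[str, int]) -> float:
    """Share of testers with two or more unprompted sessions."""
    if not sessions_by_user:
        return 0.0
    returning = sum(1 for count in sessions_by_user.values() if count >= 2)
    return returning / len(sessions_by_user)


def intervention_rate(runs: list[dict]) -> float:
    """Share of agent runs the founder had to fix before the user saw them."""
    if not runs:
        return 0.0
    edited = sum(1 for run in runs if run["founder_edited"])
    return edited / len(runs)


# Hypothetical data: session counts for the first 10 testers
# (count only sessions that were not triggered by a reminder email).
sessions = {
    "t1": 3, "t2": 1, "t3": 2, "t4": 1, "t5": 1,
    "t6": 4, "t7": 1, "t8": 1, "t9": 2, "t10": 1,
}
# Hypothetical data: 8 agent runs, 2 of which needed a manual fix.
runs = [{"founder_edited": i < 2} for i in range(8)]

repeat_rate = repeat_usage_rate(sessions)
fix_rate = intervention_rate(runs)
print(f"Repeat usage rate: {repeat_rate:.0%}")
print(f"Intervention rate: {fix_rate:.0%}")
```

Recomputing the intervention rate after each prompt or model change shows whether the agent is actually getting closer to running unattended.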

The "Mom Test" for AI Agents: Getting Unbiased Proof

A common pitfall for solo founders is "False Validation." You show your agent to a friend, they say it's "cool," and you spend three months building it. This is a trap. To get real measurement, you need to follow the principles found in Rob Fitzpatrick’s "The Mom Test." This means talking about the user's life and problems, not your agent.

When measuring problem intensity, never ask, "Do you think this agent is a good idea?" Instead, ask: "Walk me through the last time you had to do [Task X]. How long did it take? What was the most annoying part?" The data you gather here is purely qualitative, but it is the most important measurement you will ever take. If the user can't remember the last time they did the task, or if they haven't spent money or time trying to fix it already, your agent idea is likely a "vitamin" (nice to have) rather than a "painkiller" (must have).

In the AI context, this is especially crucial. Many tasks can be automated by AI, but that doesn't mean they should be. By measuring the existing friction in a user's workflow, you can predict the "Aha! Moment" for your agent. If the user spends 4 hours a week on a task and your agent can do it in 4 seconds, the delta is high enough to build a business. If the delta is only 10 minutes, you might struggle to charge for it.
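The "delta" in this paragraph is plain arithmetic, sketched below. The function name and the second example's inputs (a 12-minute manual task that the agent finishes in 2 minutes) are hypothetical; only the 4-hours-versus-4-seconds case comes from the text.

```python
def weekly_minutes_saved(
    manual_minutes_per_week: float,
    agent_seconds_per_run: float,
    runs_per_week: int = 1,
) -> float:
    """Minutes per week the agent saves compared with the manual workflow."""
    agent_minutes = agent_seconds_per_run * runs_per_week / 60
    return manual_minutes_per_week - agent_minutes


# Case from the text: 4 hours a week by hand, 4 seconds with the agent.
big_delta = weekly_minutes_saved(manual_minutes_per_week=240, agent_seconds_per_run=4)

# Hypothetical small case: 12 minutes by hand, 2 minutes with the agent.
small_delta = weekly_minutes_saved(manual_minutes_per_week=12, agent_seconds_per_run=120)

print(f"Large delta: {big_delta:.1f} minutes saved per week")
print(f"Small delta: {small_delta:.1f} minutes saved per week")
```

Plugging in the numbers users give you during interviews turns a vague sense of friction into a figure you can compare across candidate tasks.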

Building Your Measurement Arsenal: Frameworks and Tools

You don't need a complex enterprise analytics system or a dedicated data science team. For solo founders, a "lean" measurement stack is about speed and clarity. The goal is to create a "learning organization" of one, where data guides every update to your system prompt.

Measurement Frameworks for Early Stage:

The "Problem/Solution Fit" Framework, derived from Steve Blank, involves a structured approach to validating the market. First, define the problem in one sentence. Then, identify the target user, such as "Growth leads at Series A startups." Next, measure how they solve it today through competitor analysis. Finally, measure the friction in the current solution.

The "One Metric That Matters" (OMTM) approach is also valuable. Instead of watching 20 charts, pick one critical metric. For a productivity agent, it might be "Successful Automations per User per Week." If that number goes up, you're winning. If it stays flat, your feature updates aren't working.
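One way to compute "Successful Automations per User per Week" is sketched below. It assumes each agent run is recorded as a `(user_id, date, succeeded)` tuple and groups runs by ISO week; both the record shape and the choice to divide by users active that week are assumptions.

```python
from collections import defaultdict
from datetime import date


def automations_per_user_per_week(runs):
    """Map (iso_year, iso_week) to successful runs per active user that week."""
    successes = defaultdict(int)
    active_users = defaultdict(set)
    for user_id, day, succeeded in runs:
        iso = day.isocalendar()
        week = (iso[0], iso[1])
        active_users[week].add(user_id)
        if succeeded:
            successes[week] += 1
    return {
        week: successes[week] / len(active_users[week])
        for week in sorted(active_users)
    }


# Hypothetical run log covering two weeks.
run_log = [
    ("u1", date(2024, 1, 1), True),
    ("u1", date(2024, 1, 2), True),
    ("u2", date(2024, 1, 3), False),
    ("u1", date(2024, 1, 8), True),
    ("u2", date(2024, 1, 9), True),
    ("u2", date(2024, 1, 10), True),
    ("u2", date(2024, 1, 11), True),
]

omtm = automations_per_user_per_week(run_log)
for (year, week), value in omtm.items():
    print(f"{year}-W{week:02d}: {value:.1f} successful automations per user")
```

Watching this single series week over week is the whole point of the OMTM approach: if it is flat after a release, the release did not move the metric.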

Essential Tools for the Solo Founder:

For qualitative data, use Typeform to screen respondents by "Problem Intensity" and Calendly to automatically book interviews with those who score highest. For quantitative tracking, PostHog or Amplitude have generous free tiers; use them to track events such as `agent_started`, `output_copied`, or `feedback_positive`. For LLM analytics, LangSmith and Helicone are built specifically for LLM applications. They let you measure token costs and latency (how long users wait) and trace where the agent's logic failed. Finally, don't underestimate the power of "The Founder's Spreadsheet." Log every user conversation, every manual intervention, and every "bug" reported. Patterns emerge in the rows of a spreadsheet that are invisible in a fancy dashboard.

Turning Data into Decisions: Analytics Setup and Data Collection

Setting up your analytics doesn't have to be a multi-week engineering project. In a lean startup, you "instrument" only what is necessary to answer your current hypothesis. If you aren't a coder, manual tracking is not a failure—it's a high-fidelity way to learn.

Instrumentation for Non-Technical Founders:

If your agent lives in a simple tool like Zapier or a "no-code" wrapper, you can still collect data. Connect your output to a Google Sheet automatically. Every time the agent runs, it logs the prompt, the result, and the user's ID. Once a day, review the sheet. This is "manual instrumentation." You are measuring the agent's quality with your own eyes, which is far more valuable in the early days than a generic "uptime" chart.
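If you do write a little code, the same "manual instrumentation" idea can be sketched with a local CSV file standing in for the Google Sheet. The file name, column names, and helper functions below are hypothetical, and the example writes to a temporary directory so it can run anywhere.

```python
import csv
import tempfile
from datetime import datetime, timezone
from pathlib import Path

# Columns mirror what the text suggests logging, plus a timestamp.
FIELDS = ["timestamp", "user_id", "prompt", "result"]


def log_run(path: Path, user_id: str, prompt: str, result: str) -> None:
    """Append one agent run to the CSV log, writing a header for a new file."""
    is_new = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow(
            {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "user_id": user_id,
                "prompt": prompt,
                "result": result,
            }
        )


def read_runs(path: Path) -> list[dict]:
    """Load every logged run for the daily review."""
    with path.open(newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


# Hypothetical usage: two runs logged, then reviewed.
log_path = Path(tempfile.mkdtemp()) / "agent_runs.csv"
log_run(log_path, "user_1", "Summarize Monday standup", "3 action items found")
log_run(log_path, "user_2", "Summarize client call", "No action items found")

rows = read_runs(log_path)
for row in rows:
    print(row["user_id"], "|", row["prompt"], "|", row["result"])
```

A CSV like this opens directly in any spreadsheet tool, so the daily review habit stays the same whether the rows arrive through Zapier or a script.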

Advanced Data Collection Methods:

Funnel Analysis maps out the steps to value. For example: Step 1: User lands on page. Step 2: User enters prompt. Step 3: Agent generates draft. Step 4: User clicks "Approve." If 80% of users drop off at Step 3, your agent's quality is the problem. If they drop off at Step 2, your landing page copy is confusing. Funnels turn vague "it's not working" feelings into specific "fix the prompt" actions.
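The four-step funnel above can be sketched as a conversion calculation between consecutive steps. The step identifiers and the user counts are made up for illustration; the counts are chosen so that 80% of users who see a draft never click "Approve."

```python
# Step names are hypothetical identifiers for the four steps in the text.
FUNNEL_STEPS = ["landed", "entered_prompt", "draft_generated", "approved"]


def funnel_report(step_counts: dict[str, int]) -> list[tuple[str, float]]:
    """Return (transition, conversion rate) for each pair of consecutive steps."""
    report = []
    for prev_step, step in zip(FUNNEL_STEPS, FUNNEL_STEPS[1:]):
        entered = step_counts.get(prev_step, 0)
        advanced = step_counts.get(step, 0)
        conversion = advanced / entered if entered else 0.0
        report.append((f"{prev_step} -> {step}", conversion))
    return report


def biggest_drop(report: list[tuple[str, float]]) -> str:
    """Name the transition with the lowest conversion rate."""
    return min(report, key=lambda item: item[1])[0]


# Hypothetical counts of users reaching each step.
counts = {"landed": 200, "entered_prompt": 150, "draft_generated": 140, "approved": 28}

report = funnel_report(counts)
for transition, conversion in report:
    print(f"{transition}: {conversion:.0%} continue")
print("Fix first:", biggest_drop(report))
```

Here most users who receive a draft do not approve it, which points at output quality rather than at the landing page.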

Cohort Analysis is the best way to test model updates. Group users who used Version 1.0 (e.g., GPT-4o-mini) and Version 1.1 (e.g., GPT-4o). Did the retention rate improve? Cohort analysis prevents you from being fooled by a "spike" in new signups that hides the fact that old users are leaving.

Intercept Surveys are also effective. When an agent finishes a task, show a single question: "On a scale of 1-5, how much time did this save you?" This "in-the-moment" measurement tends to be far more reliable than an email survey sent three days later, because the user is rating an experience they have just had.
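The cohort comparison described above can be sketched as retention grouped by agent version. The record shape (a `version` label and a `returned` flag per user) and the sample numbers are assumptions for illustration.

```python
from collections import defaultdict


def retention_by_cohort(users: list[dict]) -> dict[str, float]:
    """Share of users in each version cohort who came back at least once."""
    totals = defaultdict(int)
    returned = defaultdict(int)
    for user in users:
        totals[user["version"]] += 1
        if user["returned"]:
            returned[user["version"]] += 1
    return {version: returned[version] / totals[version] for version in totals}


# Hypothetical cohorts: 10 users on version 1.0 (3 returned),
# 8 users on version 1.1 (4 returned).
users = (
    [{"version": "1.0", "returned": i < 3} for i in range(10)]
    + [{"version": "1.1", "returned": i < 4} for i in range(8)]
)

retention = retention_by_cohort(users)
for version, rate in retention.items():
    print(f"Version {version}: {rate:.0%} retained")
```

With cohorts this small the difference may be noise, so it is worth confirming the trend over a few more weeks of users before crediting the model change.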

The Feedback Loop: Measurement as Your Navigation System

For solo founders and early-stage teams, your resources are limited. Every dollar spent and every hour invested must be a strategic move. The "Measure" phase, when approached with a lean, agent-focused mindset, is your navigation system. It provides the real-time feedback needed to adjust your course, to avoid building something nobody wants, and to focus your efforts on achieving genuine product-market fit.

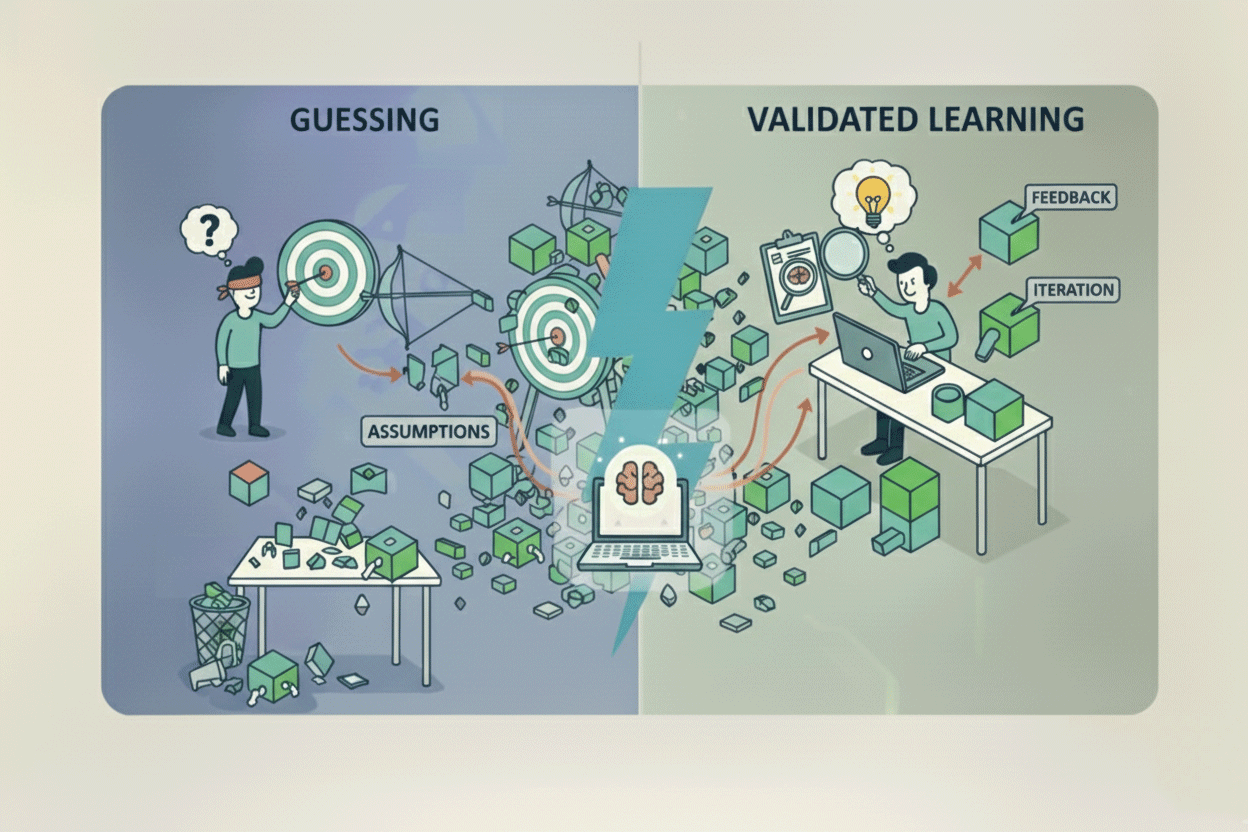

By focusing on the correct metrics, setting up simple yet effective measurement frameworks, and carefully collecting and analyzing data, you change uncertainty into clarity. You move from guessing what your customers need to truly knowing. This evidence-based approach isn't about being perfect; it's about making steady progress. It's about building proof, one validated assumption at a time.

In the AI agent era, the technology will change every week. Models will get faster, cheaper, and smarter. But the core principles of lean measurement remain the same. The founders who win are not those with the most complex codebases, but those who close the "Learning Loop" the fastest. Gather your data, trust the signals, and build the future—one measured step at a time.
